Your A/B Test Is Counting People Who Never Saw It

A/B testing platforms bucket every visitor the moment a page loads, whether they interact with your test or not. If you're testing a mini-cart experience that only 15% of visitors ever open, the other 85% are noise in your results. We've spent years writing custom JavaScript to work around this. Optimizely's Conditional Activation replaces all of that with a dropdown.

We tore apart two hotel booking experiences. Here's what we actually look at.

When our clients ask how they compare to competitors, we don't just browse the website. We inspect what they're measuring, how they're measuring it, and what that reveals about their priorities. Here's a sneak peek at how we do it.

Your A/B Test Won. But Did It Actually Win?

A mobile checkout test drove $6.2M in incremental bookings. It also killed 41,000 loyalty enrollments a year. Most optimization teams celebrate the first number and never measure the second.

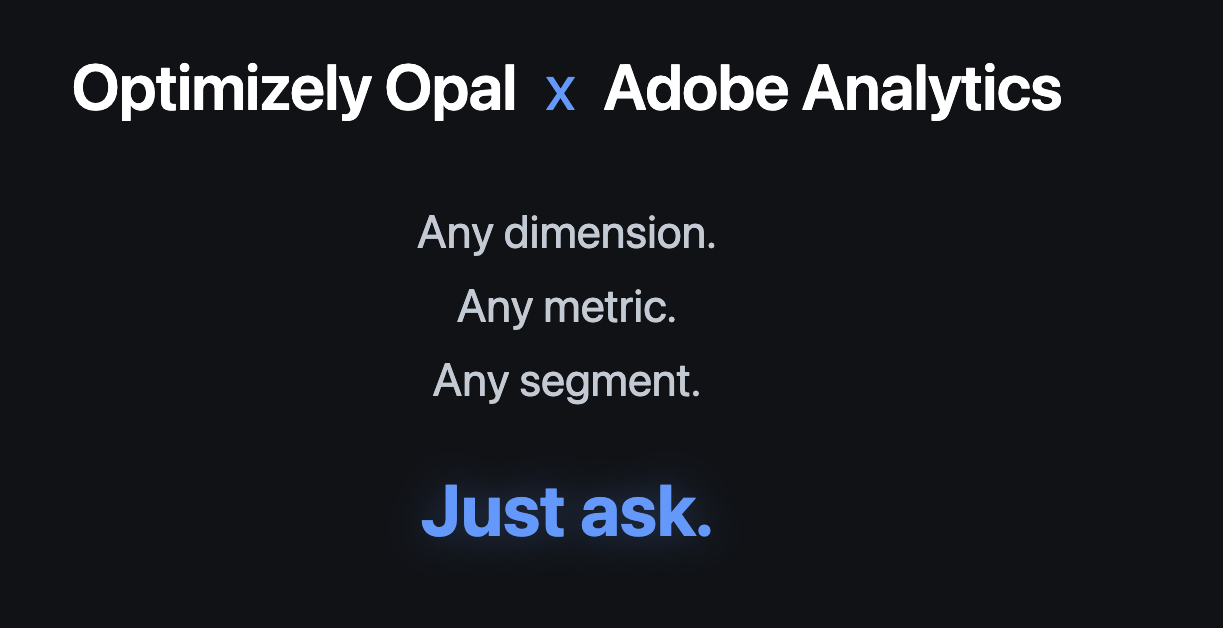

Optimizely Opal x Adobe Analytics: Ask Your Data Anything with Natural Language

A demo of the Optimizely Opal connector for Adobe Analytics. Query any dimension, metric, or segment using plain English. No report builder, no drag-and-drop, no workspace setup. Just ask.

More A/B Tests Won't Save You

The best testing programs we've seen aren't the ones that run the most tests. They're the ones where every test has a reason, every result gets analyzed with intellectual honesty, and the people doing the work are trusted to do it well. Volume is a byproduct of maturity, not a substitute for it.

The New Compliance Challenge Hiding in Your Optimization Stack

New York became the first state to require businesses to disclose when algorithms use personal data to set prices. The law took effect November 10, 2025, survived a constitutional challenge, and is being actively enforced. California is taking a different approach through antitrust law. Multiple other states have pending legislation. This is following the exact same trajectory as GDPR → state privacy laws, creating a compliance patchwork with no federal preemption in sight.

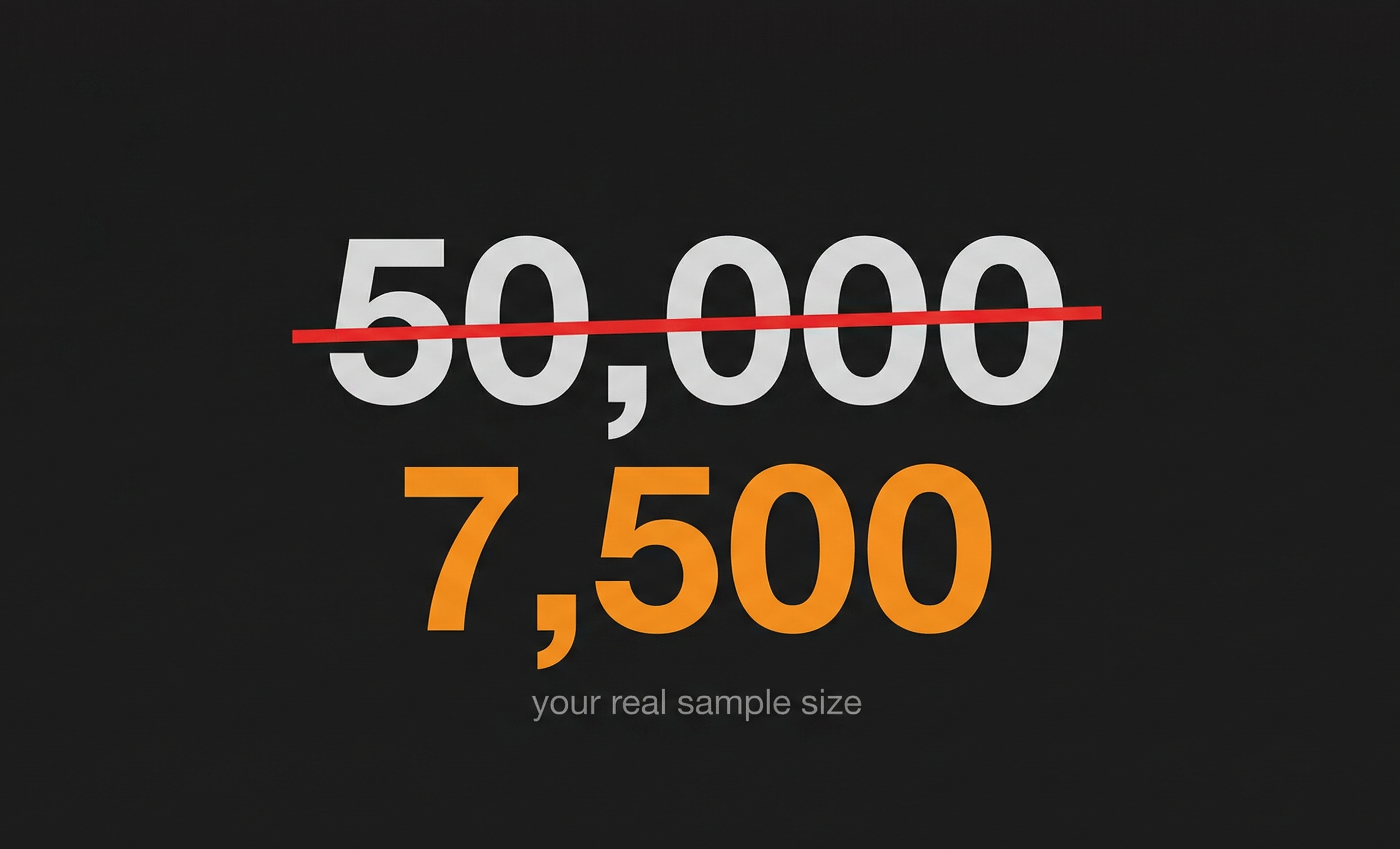

What’s the Minimum Sample Size You Need Before Trusting Your Test Results?

Learn how to calculate the right sample size to make A/B test results trustworthy without wasting time or traffic.
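
As a rough illustration of the kind of calculation involved, here is a minimal sketch using the standard two-proportion z-test approximation. It is not necessarily the method the article itself uses; the function name, the default significance level (0.05, two-sided), and the default power (0.80) are assumptions chosen for the example.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test.

    baseline_rate: control conversion rate, e.g. 0.05 for 5%
    relative_lift: smallest relative improvement worth detecting, e.g. 0.10 for +10%
    alpha, power:  assumed defaults; adjust to your program's standards
    """
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_lift <= 0:
        raise ValueError("relative_lift must be positive")

    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if p2 >= 1:
        raise ValueError("baseline_rate * (1 + relative_lift) must be below 1")

    # Critical values from the standard normal distribution.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    # Pooled rate is used for the null-hypothesis variance term.
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline conversion, +10% relative lift.
    print(sample_size_per_variant(0.05, 0.10))
```

With these hypothetical inputs the result is roughly 31,000 visitors per variant. Halving the detectable lift roughly quadruples the requirement, which is why the minimum detectable effect is usually the decision that matters most.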

Building Your First Optimizely Opal Custom Tool

Optimizely Opal's Custom Tools feature lets you extend the Opal AI agent's capabilities by connecting it to your own services and APIs. In this guide, we'll walk through creating a simple custom tool from scratch, deploying it to the cloud, and integrating it with Opal.

By the end of this tutorial, you'll have a working custom tool that responds to natural language queries in Opal chat. We'll keep it straightforward: no complex integrations, just the essentials you need to get started.

What Every Optimization Team Needs to Understand About Working With AI Whether They Use It for Code or Not

This article is about code generation, but the lessons apply to everything AI does in optimization. The difference between "CSS-only" AI and "almost everything" AI isn't the model you're using. It's the infrastructure you build around it. And that infrastructure—documentation, constraints, institutional knowledge—transforms how teams work with AI on any complex task.

Why "More Data" Won't Make Your AI Smarter

With AI, the temptation to move fast is almost irresistible. You get instant outputs, the thrill of novelty, the satisfaction of "adopting AI" before your competitors. It's a quick high.

But like any quick high, it wears off fast and leads to disappointment. Three months later, you're back where you started—except now you've also eroded team confidence in AI and wasted time on generic outputs that didn't move the business forward.

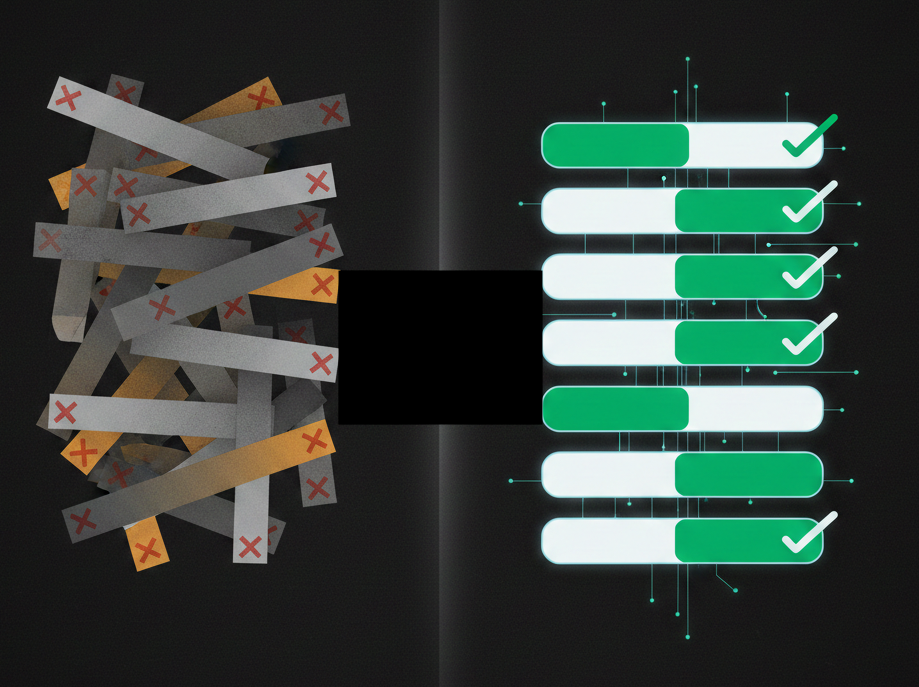

AI as a Forcing Function for Organizational Maturity

Most enterprise experimentation teams know they lack formalized process. They feel it daily in the endless prioritization debates, the ad-hoc QA checks, the experiments that launch without clear success criteria, the results that spark more questions than answers.

The Maturity Paradox: Why Small Changes Drive Big Results in Enterprise Optimization

The CRO industry has it backwards. While agencies push "big, bold changes" and platforms promise "30% conversion lifts," the most sophisticated enterprise programs are finding massive value in subtle improvements that inexperienced teams would dismiss as trivial. This isn't about settling for small wins; it's about understanding how human psychology, business maturity, and compound growth actually work at scale.

Everyone Hates Popups But……They Work!!!

Popups. Sliders. Takeovers. We all hate them. Right?

Everyone says popups suck, yet sites that use popups consistently outperform sites without them.

If this is true, then why do so many sites choose not to deploy a well-designed and targeted popup strategy?

How to Choose the Right A/B Testing Tool: People and Process Matter

A scoring framework for helping select the best A/B testing partner for YOU.

Is Your Optimization Team GAMIFIED??

Think about how impactful it would be if employees from all levels of your organization – new hires to SVPs – and from all departments were engaged and submitting test ideas. Your Optimization team would receive many fresh test ideas that have never been considered before, and many of them would tie directly to business objectives!

Minimalization Optimization…Say What??

Simplicity and minimalization are directly tied to consumer psychology. A site experience that overwhelms a shopper is not good for business. Not only are the chances high that they will not purchase a product, there’s also a good chance that they will tell others about their experience, because as we all know, bad news travels much faster than good news.

Optimization - More Than a Means to an End?

Is Optimization a means to an end or an end in and of itself? Is it good enough to optimize your “null search results” page on your site simply for the sake of making it as functional as possible for your end user? Or are you doing it to improve conversions? Does the former automatically drive the latter? Does your senior leadership ask about conversion and revenue impact for every test and discard the test if there was none?

A Product Recommendations Framework – Where Does My Team Even Start??

You’ve been tasked by your Executive Leadership Team to increase orders and average order value (AOV) over the next 12 months. You have a solid plan in place, as you’ve worked on it for months. Yet there’s something nagging at you, as if something important is missing from your strategy and you’re not quite sure what it is.

Unlock the Hidden Power of Tertiary Metrics: Outperform Primary Metrics in A/B Testing for Breakthrough Results

If 25% of A/B tests run on the site result in a winner, what do we do with the other 75% of the tests that were run on the site that did not produce a winner? I like to call these tests INCONCLUSIVE AT THE PRIMARY METRIC LEVEL. I don’t like the term “losers” at all. Once you call a test a “loser,” your executive team stops listening. Trust me on this one, I see it happen all the time in meetings!

Making Sense of A/B Test Statistics

Let’s use the term Confidence Interval as a case study in how easy it is to use stories to effectively communicate difficult terms.