We Took Google's New AI Analyst for a Test Drive

Google is actively marketing Analytics Advisor, pitched as your "always-on data analyst." It's the latest in a wave of vendor AI tools prompting brands to reconsider how they staff analytics work, and in some cases, to replace humans with tools like this one. Before drawing conclusions, we ran it through four kinds of questions against real enterprise GA data: reporting, diagnostic, strategic, and anomaly. The results were more nuanced than the marketing suggests.

Your A/B Test Is Counting People Who Never Saw It

A/B testing platforms bucket every visitor the moment a page loads, whether they interact with your test or not. If you're testing a mini-cart experience that only 15% of visitors ever open, the other 85% are noise in your results. We've spent years writing custom JavaScript to work around this. Optimizely's Conditional Activation replaces all of that with a dropdown.
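To see why the unexposed 85% matter, here is a minimal arithmetic sketch. It assumes, purely for illustration, that exposed and unexposed visitors convert at the same baseline rate and that the variant only affects visitors who open the mini-cart. The function name and the 10% lift are hypothetical, not figures from the article.

```python
def diluted_lift(exposed_share: float, lift_among_exposed: float) -> float:
    """Relative lift measured across ALL bucketed visitors.

    Assumption (illustrative only): every visitor has the same baseline
    conversion rate, and the variant changes behavior only for the
    share of visitors who actually see the tested experience.
    """
    if not 0 < exposed_share <= 1:
        raise ValueError("exposed_share must be in (0, 1]")
    return exposed_share * lift_among_exposed


# Hypothetical scenario: 15% of visitors open the mini-cart and the
# variant lifts their conversion by 10%.
measured = diluted_lift(exposed_share=0.15, lift_among_exposed=0.10)
print(f"Lift visible across all bucketed visitors: {measured:.2%}")
```

Under these assumptions the effect you are trying to detect shrinks in proportion to the exposed share, which is why limiting the test to visitors who actually see the change makes a real effect easier to detect.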

We tore apart two hotel booking experiences. Here's what we actually look at.

When our clients ask how they compare to competitors, we don't just browse the website. We inspect what they're measuring, how they're measuring it, and what that reveals about their priorities. Here's a sneak peek at how we do it.

Your A/B Test Won. But Did It Actually Win?

A mobile checkout test drove $6.2M in incremental bookings. It also killed 41,000 loyalty enrollments a year. Most optimization teams celebrate the first number and never measure the second.

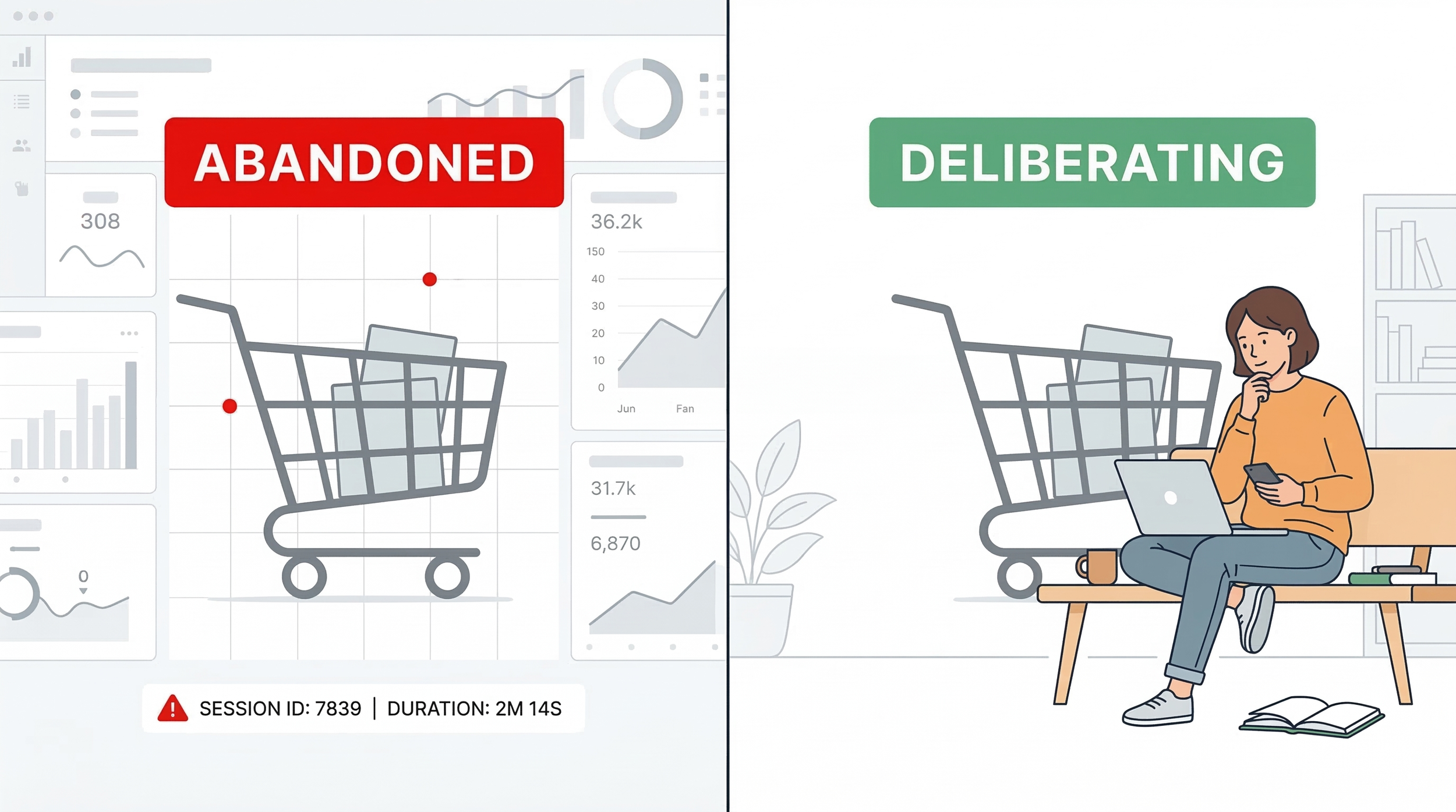

Your Customers Aren't Abandoning Their Cart — They're Thinking

Cart abandonment rate hides three completely different behaviors. The analysts who split them apart are the ones who find something actionable.

Your Data Team Structure is Probably Wrong

There are two dominant philosophies for structuring data teams: centralized and distributed. Both are wrong. Centralized teams have the expertise but lack business context. Distributed teams have proximity but lack leadership, governance, and consistent data. The answer is a hybrid: centralized expertise with embedded liaisons in the business units you serve. Here's why, and how to rank the three models.

Optimizely Opal x Adobe Analytics: Ask Your Data Anything with Natural Language

A demo of the Optimizely Opal connector for Adobe Analytics. Query any dimension, metric, or segment using plain English. No report builder, no drag-and-drop, no workspace setup. Just ask.

What If Your Optimization Team Could Answer Their Own Data Questions?

The organizations that will lead over the next few years aren't the ones with the best point solutions. They're the ones that connect their tools into a system where data flows to wherever decisions are being made — without requiring a human intermediary for every question.

More A/B Tests Won't Save You

The best testing programs we've seen aren't the ones that run the most tests. They're the ones where every test has a reason, every result gets analyzed with intellectual honesty, and the people doing the work are trusted to do it well. Volume is a byproduct of maturity, not a substitute for it.

Under the Hood at 33 Sticks: What Four Months Taught Me About Data, Strategy, and What Really Matters

I was hired without a CV. I learned that 2-hour meetings are a scam. And I discovered that being called 'difficult' often just means refusing to shrink into systems that weren't built for real innovation. A reflection on four months that permanently raised my standards for how I want to work.

Data Theater: What McKinsey's AI Report Actually Says (And What LinkedIn Gurus Won't Tell You)

McKinsey's latest AI research is everywhere on LinkedIn: "57% of jobs automated! $2.9 trillion opportunity!" But the viral posts are cherry-picking stats and stripping context. We read the actual 60-page report. Here's what decision-makers need to know instead.

The New Compliance Challenge Hiding in Your Optimization Stack

New York became the first state to require businesses to disclose when algorithms use personal data to set prices. The law took effect November 10, 2025, survived a constitutional challenge, and is being actively enforced. California is taking a different approach through antitrust law. Multiple other states have pending legislation. This is following the exact same trajectory as GDPR → state privacy laws, creating a compliance patchwork with no federal preemption in sight.

We Asked 3 LLMs to Build a Data Viz. Here's What Happened.

If you've been on LinkedIn lately, you've probably seen your fair share of posts about how "AI will replace your analysts!"

"ChatGPT just made my whole data team obsolete!"

We got curious. Not about whether AI can theoretically do analysis (we know it can be a very useful tool), but about whether it can deliver an actual work product. The kind of thing a marketing director might hand to their exec team. So we ran a simple test.

How to spot when someone cherry-picks data to tell you what they want you to believe.

We've seen it used on several subreddits, it has made the rounds on Facebook, and of course it has been shared ad nauseam by LinkedIn thought leaders. But do the charts, as one poster on LinkedIn put it, make it obvious that we are not in a bubble?

The charts seem legit, although they have been overshared so much at this point that the pixel quality has clearly degraded. The numbers seem authoritative. The argument feels data-driven.

But is it obvious?

Let's break down exactly what's going on here, and more importantly, how you can spot similar red flags in any analysis you encounter.

The claim.

“If this were a bubble, we'd see massive cash burn and unsustainable growth. But the numbers show real adoption, real revenue, and real productivity. What matters is net profit growth, something that frankly wasn't there at all in the dot com bubble. This is what separates AI from past 'bubbles'.”

The Map That Never Dies: Why This Misleading Electoral Visualization Still Works in 2025

The White House shared a viral electoral map that's been debunked for a decade. Here's why geographic county maps systematically misrepresent election results and why they'll never die.

How to Spot Bad Data: A Case Study in Corporate Research Theater

The viral Empower survey about Gen Z salaries? Methodologically worthless. Learn the 7 red flags to spot bad data and protect yourself from misleading research.

How to Prioritize A/B Tests: A Simple Framework for Choosing What to Run First

Learn how to prioritize A/B tests using the ICE framework. Score tests on impact, confidence, and ease to build a strategic testing roadmap that drives results.
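As a rough illustration of ICE scoring, the sketch below rates each idea from 1 to 10 on impact, confidence, and ease, then ranks by the average. Averaging is an assumption here (some teams multiply the three scores instead), and the class name, function name, and example ideas are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    name: str
    impact: int      # 1-10: how much could this move the metric?
    confidence: int  # 1-10: how sure are we that it will?
    ease: int        # 1-10: how cheap is it to build and run?

    @property
    def ice_score(self) -> float:
        # Assumption: ICE as a simple average of the three ratings.
        return (self.impact + self.confidence + self.ease) / 3


def prioritize(ideas: list[TestIdea]) -> list[TestIdea]:
    """Return ideas sorted from highest to lowest ICE score."""
    return sorted(ideas, key=lambda idea: idea.ice_score, reverse=True)


# Hypothetical backlog, for illustration only.
backlog = [
    TestIdea("Simplify checkout form", impact=8, confidence=6, ease=4),
    TestIdea("Rewrite hero headline", impact=5, confidence=5, ease=9),
    TestIdea("Rebuild search results", impact=9, confidence=4, ease=2),
]

for idea in prioritize(backlog):
    print(f"{idea.ice_score:5.2f}  {idea.name}")
```

The scores are only as good as the ratings behind them, so treat the ranking as a starting point for discussion and not as a verdict.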

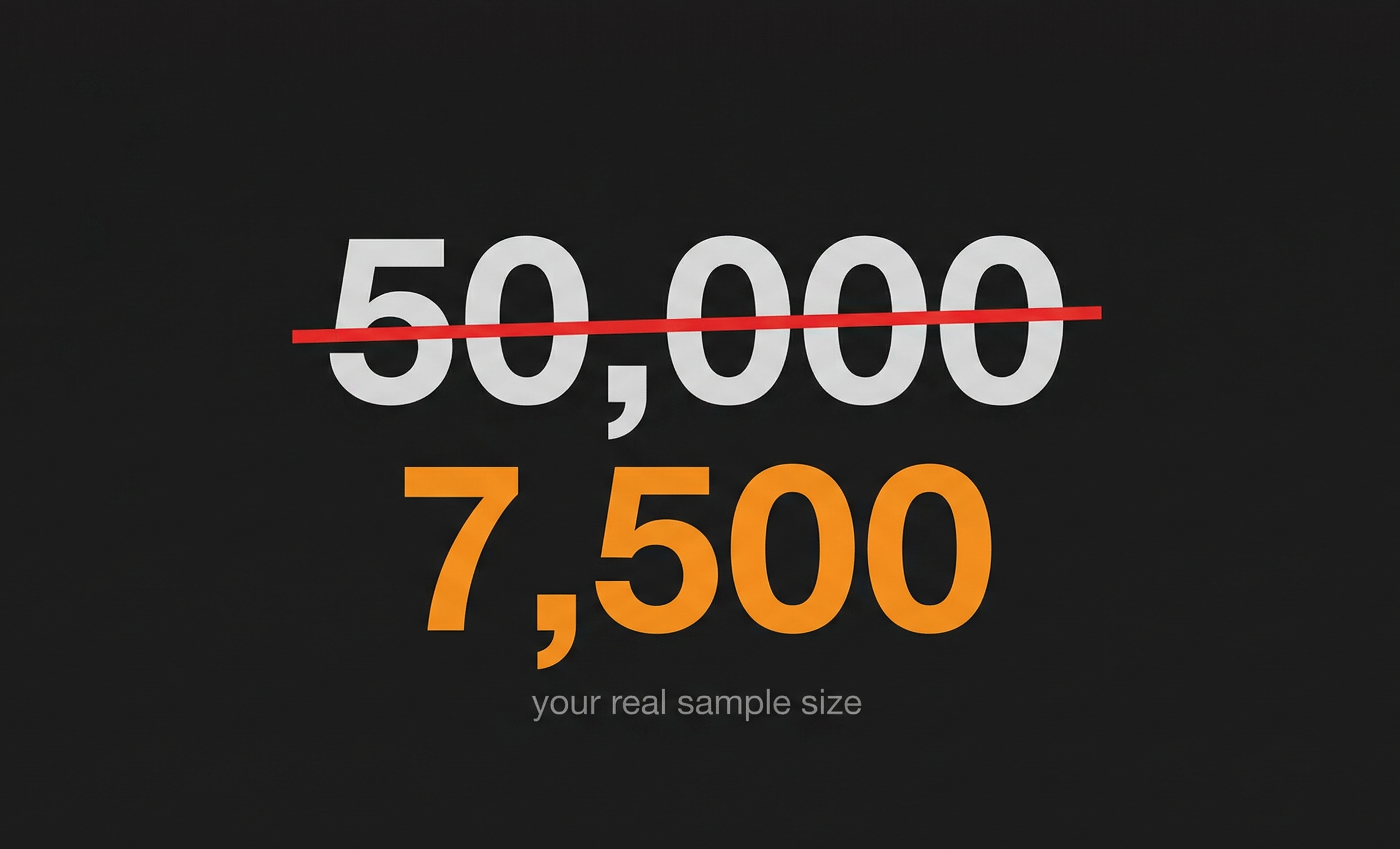

What’s the Minimum Sample Size You Need Before Trusting Your Test Results?

Learn how to calculate the right sample size to make A/B test results trustworthy without wasting time or traffic.
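One common way to estimate this is the standard two-proportion formula, sketched below with only the Python standard library. The article may use a different method; the function name, the default 5% significance level, and the 80% power are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    alpha: float = 0.05,   # two-sided significance level (assumed default)
    power: float = 0.80,   # chance of detecting a true effect (assumed default)
) -> int:
    """Approximate visitors needed per variant for a two-sided
    two-proportion z-test, using the normal approximation."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must be in (0, 1) and must differ")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)

    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical inputs: 5% baseline conversion, 10% relative lift.
n = sample_size_per_variant(baseline_rate=0.05, relative_lift=0.10)
print(f"Visitors needed per variant: {n:,}")
```

Smaller expected lifts push the required sample up quickly, because the difference between the two rates is squared in the denominator.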

Building Your First Optimizely Opal Custom Tool

Optimizely Opal's Custom Tools feature lets you extend the Opal AI agent's capabilities by connecting it to your own services and APIs. In this guide, we'll walk through creating a simple custom tool from scratch, deploying it to the cloud, and integrating it with Opal.

By the end of this tutorial, you'll have a working custom tool that responds to natural language queries in Opal chat. We'll keep it straightforward, no complex integrations, just the essentials you need to get started.

What Every Optimization Team Needs to Understand About Working With AI Whether They Use It for Code or Not

This article is about code generation, but the lessons apply to everything AI does in optimization. The difference between "CSS-only" AI and "almost everything" AI isn't the model you're using. It's the infrastructure you build around it. And that infrastructure—documentation, constraints, institutional knowledge—transforms how teams work with AI on any complex task.